Choosing Your AI Provider

In 2026, developers have more options than ever for building AI chatbots. OpenAI's GPT-5, Anthropic's Claude, Google's Gemini, and open-source models like Llama 4 each have their strengths. For most projects, we recommend starting with OpenAI's API for its excellent documentation and developer experience.

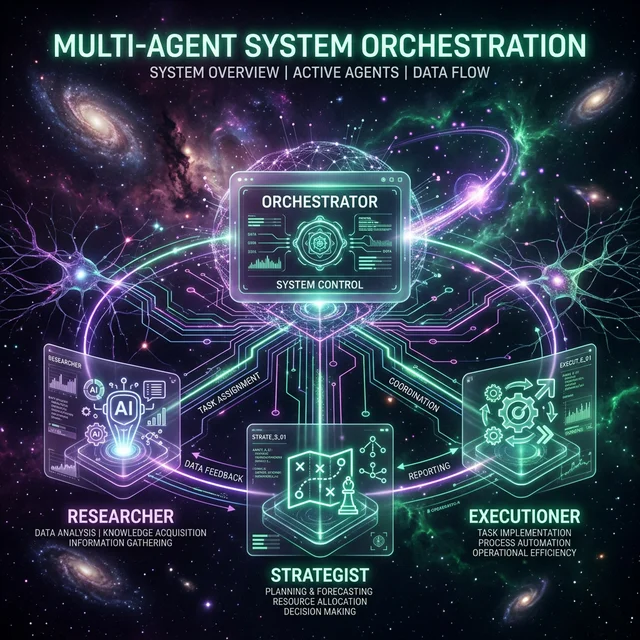

Architecture Overview

A modern AI chatbot typically consists of four components: a frontend interface, an API backend, a vector database for RAG (Retrieval-Augmented Generation), and the LLM itself. We'll use Next.js for the frontend, Express.js for the backend, Pinecone for vector storage, and OpenAI's API for the LLM.

Setting Up Retrieval-Augmented Generation (RAG)

RAG allows your chatbot to answer questions about your specific data. The process involves chunking your documents, generating embeddings, storing them in a vector database, and retrieving relevant chunks at query time to provide context to the LLM.
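
The retrieval steps described above can be sketched in plain TypeScript. This is a minimal in-memory illustration, not the Pinecone or OpenAI client code: the function names (`chunkText`, `cosineSimilarity`, `retrieveTopK`, `buildPrompt`), the `StoredChunk` type, and the character-based chunking with overlap are all assumptions made for the example. In a real deployment the embeddings would come from an embedding model and the similarity search would run inside the vector database.

```typescript
// Hypothetical shape for a stored chunk; a vector database would hold these.
interface StoredChunk {
  text: string;
  embedding: number[];
}

// Split text into chunks of `size` characters that overlap by `overlap`
// characters, so a sentence cut at a boundary still appears whole in one chunk.
function chunkText(text: string, size: number, overlap: number): string[] {
  if (size <= 0 || overlap < 0 || overlap >= size) {
    throw new Error("require size > 0 and 0 <= overlap < size");
  }
  const chunks: string[] = [];
  for (let start = 0; start < text.length; start += size - overlap) {
    chunks.push(text.slice(start, start + size));
    if (start + size >= text.length) break; // last chunk reached the end
  }
  return chunks;
}

// Cosine similarity between two equal-length vectors (0 if either is all zeros).
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("vector length mismatch");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k stored chunks most similar to the query embedding.
function retrieveTopK(
  queryEmbedding: number[],
  store: StoredChunk[],
  k: number
): StoredChunk[] {
  return store
    .map((chunk) => ({
      chunk,
      score: cosineSimilarity(queryEmbedding, chunk.embedding),
    }))
    .sort((a, b) => b.score - a.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}

// Assemble the retrieved chunks and the user's question into one prompt string.
function buildPrompt(question: string, context: StoredChunk[]): string {
  const contextBlock = context
    .map((chunk, i) => `[${i + 1}] ${chunk.text}`)
    .join("\n");
  return `Answer using only the context below.\n\nContext:\n${contextBlock}\n\nQuestion: ${question}`;
}
```

At query time, the flow would be: embed the user's question, call `retrieveTopK` (or the vector database's equivalent query), pass the result to `buildPrompt`, and send that prompt to the LLM. Chunk size and overlap are tuning parameters; the values that work best depend on your documents.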

Building the Interface

Your chatbot interface should support streaming responses (for a better UX), message history, typing indicators, and error handling. We'll use Server-Sent Events (SSE) for streaming and React state management for the chat flow.
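
To make the streaming flow concrete, here is a minimal sketch of the two pieces that sit on either side of an SSE connection: framing a token on the server and reassembling events on the client. The names `formatSseEvent`, `SseParser`, `ChatMessage`, and `appendToken` are hypothetical, and the parser is deliberately simplified: it assumes `\n` line endings and only handles `data:` fields, whereas the full SSE format also defines `event:`, `id:`, and `retry:`. In the browser, the built-in `EventSource` API handles this parsing for GET requests.

```typescript
// Server side: wrap a payload in SSE framing. Each line of the payload gets
// its own "data:" field, and a blank line terminates the event.
function formatSseEvent(data: string): string {
  return (
    data
      .split("\n")
      .map((line) => `data: ${line}`)
      .join("\n") + "\n\n"
  );
}

// Client side: network chunks can split an event anywhere, so buffer the
// incoming text and only emit events once their terminating blank line arrives.
class SseParser {
  private buffer = "";

  push(chunk: string): string[] {
    this.buffer += chunk;
    const events: string[] = [];
    let boundary = this.buffer.indexOf("\n\n");
    while (boundary !== -1) {
      const rawEvent = this.buffer.slice(0, boundary);
      this.buffer = this.buffer.slice(boundary + 2);
      const dataLines = rawEvent
        .split("\n")
        .filter((line) => line.startsWith("data:"))
        .map((line) => line.slice(5).replace(/^ /, "")); // drop one leading space
      if (dataLines.length > 0) events.push(dataLines.join("\n"));
      boundary = this.buffer.indexOf("\n\n");
    }
    return events;
  }
}

interface ChatMessage {
  role: "user" | "assistant";
  content: string;
}

// State update for the chat flow: append a streamed token to the assistant
// message in progress, or start a new assistant message if there is none.
// Returns a new array so it can be used directly in a React state setter.
function appendToken(messages: ChatMessage[], token: string): ChatMessage[] {
  const last = messages[messages.length - 1];
  if (last && last.role === "assistant") {
    return [
      ...messages.slice(0, -1),
      { ...last, content: last.content + token },
    ];
  }
  return [...messages, { role: "assistant", content: token }];
}
```

In an Express handler, the server would set the `Content-Type: text/event-stream` header and write `formatSseEvent(token)` for each token it receives from the LLM. On the client, each event returned by `push` would be passed to something like `setMessages((prev) => appendToken(prev, token))`.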

Deployment and Monitoring

Deploy your chatbot using Vercel for the frontend and Railway for the backend. Set up logging with LangSmith to monitor conversations, track token usage, and identify areas for improvement. Always implement rate limiting and input validation for production deployments.
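
As a rough illustration of the last point, the sketch below shows one way rate limiting and input validation might look. It is an assumption-laden example, not a production recipe: `FixedWindowRateLimiter` and `validateMessage` are hypothetical names, the limiter keeps its counters in process memory (so it would not work across multiple backend instances), and the 2,000-character default limit is an arbitrary placeholder. Established middleware or a shared store such as Redis is usually a better fit for real deployments.

```typescript
// Fixed-window rate limiter: each key (for example, a client IP or user ID)
// may make at most `limit` requests per `windowMs` milliseconds.
class FixedWindowRateLimiter {
  private limit: number;
  private windowMs: number;
  private windows = new Map<string, { start: number; count: number }>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // `now` is injectable so the limiter can be tested without real time passing.
  allow(key: string, now: number = Date.now()): boolean {
    const current = this.windows.get(key);
    if (!current || now - current.start >= this.windowMs) {
      // No window yet, or the old one expired: start a fresh window.
      this.windows.set(key, { start: now, count: 1 });
      return true;
    }
    if (current.count < this.limit) {
      current.count += 1;
      return true;
    }
    return false; // over the limit for this window
  }
}

type ValidationResult =
  | { ok: true; message: string }
  | { ok: false; error: string };

// Validate a chat message taken from an untrusted request body.
function validateMessage(input: unknown, maxLength = 2000): ValidationResult {
  if (typeof input !== "string") {
    return { ok: false, error: "message must be a string" };
  }
  const message = input.trim();
  if (message.length === 0) {
    return { ok: false, error: "message must not be empty" };
  }
  if (message.length > maxLength) {
    return { ok: false, error: `message exceeds ${maxLength} characters` };
  }
  return { ok: true, message };
}
```

In the backend, a request handler would typically call `allow` first and respond with HTTP 429 when it returns `false`, then call `validateMessage` and respond with HTTP 400 on failure, before any tokens are spent on an LLM call.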
